1. Introduction

1.1 Background and Motivation

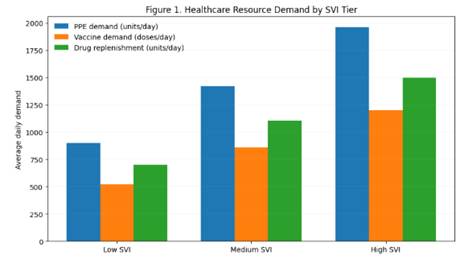

Healthcare supply chains shape population health by managing essential resources such as medications, personal protective equipment, vaccines, and diagnostic services. Inequity in these supply chains has been documented, especially during public health emergencies, when resource scarcity disproportionately affects low-income, rural, and other vulnerable populations (Dasgupta et al., 2020; Jean-Jacques & Bauchner, 2021). This inequity is not only a matter of resource availability; it also stems from how allocation decisions are made, typically with a focus on efficiency and little deliberate attention to their downstream effects on population health.

Over the past few years, healthcare organizations and public agencies have begun to use machine learning (ML) and optimization models to improve the efficiency of demand forecasting and logistics (Ivanov & Dolgui, 2020). However, the decisions these models produce are often opaque and may fail to ensure fairness. As a result, they can perpetuate existing inequities, with underserved populations being allocated fewer resources (Obermeyer et al., 2019).

1.2 Limitations of Existing Approaches

Prior research on healthcare supply chain optimization has primarily addressed cost minimization, service-level maximization, and resilience to disruption (Choi, 2021). Meanwhile, the emerging fields of AI fairness and explainable AI (XAI) have shown that ML models can be audited with techniques such as LIME (Ribeiro et al., 2016) and SHAP values (Lundberg & Lee, 2017). These two lines of research, however, remain largely disconnected.

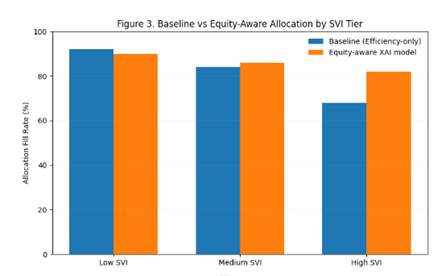

Most current research on fairness in healthcare analytics stops at detecting bias and comparing performance across groups, offering little insight into how the detected inequities can be corrected within the system. Conversely, supply chain optimization models seldom incorporate fairness directly, treating it as an external consideration rather than as an explicit objective or constraint in the decision model.

1.3 Explainable AI for Equity-Aware Supply Chains

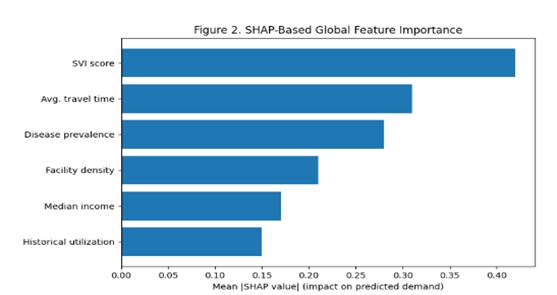

Explainable AI offers a powerful way to address this gap by making the logic of a predictive model transparent to decision-makers. Feature attribution, for example, can show how socioeconomic characteristics, geographic distance, or utilization patterns affect allocations to a particular group (Doshi-Velez & Kim, 2017). In healthcare logistics, XAI can therefore serve not only as a diagnostic tool but also as a policy-auditing tool that reveals sources of inequity.
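To make feature attribution concrete, the following sketch computes exact Shapley values, the attribution concept underlying SHAP, for one region's demand forecast by enumerating every feature coalition. The demand model, its coefficients, and the feature names (`income_index`, `distance_km`, `utilization_rate`) are hypothetical. Features outside a coalition are set to a baseline profile (here an assumed "average region"), which is one common choice of value function. Brute-force enumeration is only practical for a handful of features; the SHAP library relies on faster estimators.

```python
from itertools import combinations
from math import factorial
from typing import Callable, Dict, List

Features = Dict[str, float]


def shapley_values(model: Callable[[Features], float],
                   x: Features, baseline: Features) -> Features:
    """Exact Shapley attributions of model(x) relative to model(baseline).

    Features absent from a coalition take their baseline value. All 2^n
    coalitions are enumerated, so this is only feasible for small n.
    """
    names: List[str] = list(x)
    n = len(names)

    def value(coalition: set) -> float:
        # Present features use the region's values; absent ones the baseline.
        point = {f: (x[f] if f in coalition else baseline[f]) for f in names}
        return model(point)

    phi: Features = {}
    for i in names:
        others = [f for f in names if f != i]
        total = 0.0
        for k in range(n):
            # Shapley weight |S|! (n - |S| - 1)! / n! for coalitions of size k.
            weight = factorial(k) * factorial(n - k - 1) / factorial(n)
            for subset in combinations(others, k):
                s = set(subset)
                total += weight * (value(s | {i}) - value(s))
        phi[i] = total
    return phi


# Hypothetical linear demand model, for illustration only.
def demand_model(f: Features) -> float:
    return (100.0 + 40.0 * f["income_index"]
            - 2.0 * f["distance_km"] + 50.0 * f["utilization_rate"])


region = {"income_index": 0.2, "distance_km": 30.0, "utilization_rate": 0.4}
average = {"income_index": 0.5, "distance_km": 10.0, "utilization_rate": 0.6}
phi = shapley_values(demand_model, region, average)
```

For a linear model each attribution reduces to the coefficient times the feature's deviation from the baseline, so the region's lower forecast (68 versus 130 units) is attributed mostly to distance (about −40), with smaller contributions from income (about −12) and utilization (about −10); the attributions sum to the 62-unit gap.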

Building on this, fairness-aware machine learning techniques such as reweighted loss functions, group-based constraints, and disparity penalties allow prediction models to account for equity-related factors (Mehrabi et al., 2021). Fair predictions alone, however, are not sufficient: equity is realized only when those predictions are translated into resource allocation decisions.
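Two of these techniques can be combined in a single training objective, sketched below: squared error reweighted by inverse group frequency, plus a penalty on the gap in mean signed error between the best- and worst-served groups. The group labels, weighting scheme, penalty definition, and weight `lam` are illustrative assumptions, not a specification of the paper's models.

```python
from collections import Counter
from typing import Dict, List, Sequence


def group_weights(groups: Sequence[str]) -> List[float]:
    """Inverse-frequency weights so every group contributes equally in total."""
    counts = Counter(groups)
    n_groups = len(counts)
    return [len(groups) / (n_groups * counts[g]) for g in groups]


def fair_loss(y_true: Sequence[float], y_pred: Sequence[float],
              groups: Sequence[str], lam: float = 1.0) -> float:
    """Reweighted mean squared error plus lam * (gap in mean signed error)."""
    w = group_weights(groups)
    residuals = [p - t for t, p in zip(y_true, y_pred)]
    weighted_mse = sum(wi * r * r for wi, r in zip(w, residuals)) / len(residuals)

    # Mean signed residual per group; negative means systematic under-prediction.
    by_group: Dict[str, List[float]] = {}
    for g, r in zip(groups, residuals):
        by_group.setdefault(g, []).append(r)
    group_bias = [sum(rs) / len(rs) for rs in by_group.values()]
    disparity = max(group_bias) - min(group_bias)
    return weighted_mse + lam * disparity


# Three urban records predicted exactly; one rural record under-predicted by 4.
loss = fair_loss([10.0, 10.0, 10.0, 10.0], [10.0, 10.0, 10.0, 6.0],
                 ["urban", "urban", "urban", "rural"], lam=0.5)
```

In this example the reweighting raises the squared-error term from 4 (unweighted) to 8, and the disparity penalty adds 0.5 × 4 = 2, for a loss of 10, so the minority group's under-prediction is no longer diluted by the majority.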

1.4 Proposed Framework and Contributions

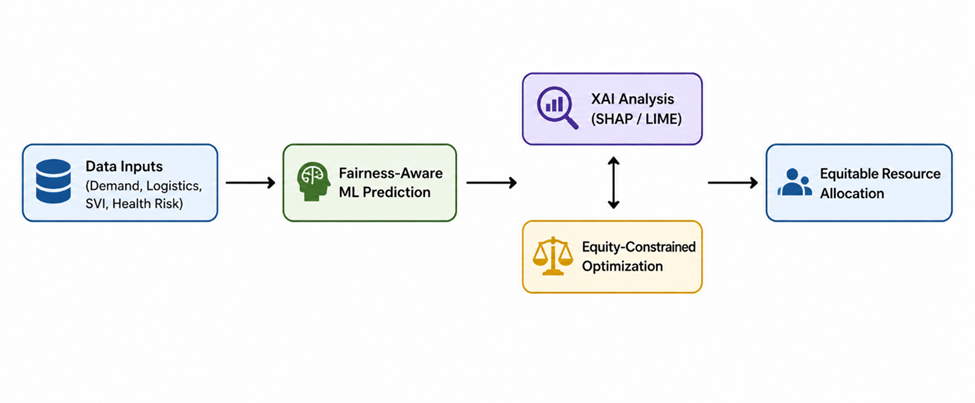

This paper proposes an Explainable and Fairness-Constrained AI framework that integrates predictive modeling, interpretability, and optimization to address health disparities in healthcare supply chains. The framework has three integrated components:

fairness-aware ML models for demand and risk prediction;

XAI-based bias diagnosis, which uses SHAP and LIME to identify the causes of unequal outcomes; and

an equity-aware optimization model that incorporates fairness constraints into the allocation process while respecting practical budget and capacity limits.
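The third component can be illustrated with a small linear program: maximize total health benefit subject to a budget, per-region capacity, and an equity floor requiring every region to receive at least a fraction α (`alpha`) of its forecast demand. Because each unit costs one unit of budget, this particular LP is solved exactly by meeting all floors first and then filling the remaining budget in descending order of benefit. The region data, the per-unit benefit values, and the floor form of the fairness constraint are assumptions for illustration; richer formulations would need a general LP or MIP solver.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Region:
    name: str
    demand: float    # forecast demand (units)
    capacity: float  # hypothetical max units the region can absorb
    benefit: float   # hypothetical health benefit per unit delivered


def allocate(regions: List[Region], budget: float, alpha: float) -> Dict[str, float]:
    """Solve: maximize sum(benefit_i * x_i)
    subject to sum(x_i) <= budget and
               alpha * demand_i <= x_i <= min(demand_i, capacity_i).
    With unit costs, meeting every equity floor first and then filling in
    descending order of benefit is optimal for this LP."""
    upper = {r.name: min(r.demand, r.capacity) for r in regions}
    x = {r.name: alpha * r.demand for r in regions}  # equity floors
    if any(x[name] > upper[name] for name in upper):
        raise ValueError("an equity floor exceeds a region's capacity")
    remaining = budget - sum(x.values())
    if remaining < 0:
        raise ValueError("budget cannot cover the equity floors")

    for r in sorted(regions, key=lambda reg: reg.benefit, reverse=True):
        if r.benefit <= 0 or remaining <= 0:
            break  # extra units add no benefit, or the budget is spent
        extra = min(upper[r.name] - x[r.name], remaining)
        x[r.name] += extra
        remaining -= extra
    return x


regions = [
    Region("urban", demand=100.0, capacity=100.0, benefit=3.0),
    Region("rural", demand=80.0, capacity=60.0, benefit=1.0),
]
efficient = allocate(regions, budget=120.0, alpha=0.0)  # no equity floor
equitable = allocate(regions, budget=120.0, alpha=0.5)  # >= 50% coverage each
```

Raising α from 0 to 0.5 lifts rural coverage from 25% to 50% of demand while total benefit falls from 320 to 280, which is the kind of efficiency-fairness trade-off the framework aims to make explicit.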

By feeding explanation outputs directly into the optimization constraints, the framework allows decision-makers to make explicit trade-offs between efficiency and fairness. The framework also aligns with U.S. federal health priorities established in Healthy People 2030 and the HHS Equity Action Plan, which emphasize accountability, data-driven assessment of health and health care equity, and the reduction of health disparities (U.S. Department of Health and Human Services, 2021).
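How explanation outputs enter the constraints is not specified at this point. One hypothetical coupling, sketched below, treats a feature flagged during XAI diagnosis (for example, historical utilization depressed by access barriers) as a proxy and adds back any negative attribution it contributes to a region's forecast before setting that region's equity floor. The function name, its inputs, and the adjustment rule are assumptions for illustration only.

```python
from typing import Dict


def explanation_adjusted_floors(forecast: Dict[str, float],
                                proxy_attribution: Dict[str, float],
                                alpha: float) -> Dict[str, float]:
    """Hypothetical rule linking XAI output to allocation constraints.

    forecast: predicted demand per region.
    proxy_attribution: attribution (e.g., a SHAP value) of a feature flagged
        as a proxy for access barriers, in the same units as the forecast.
    alpha: required coverage fraction (the equity floor).
    Returns the minimum allocation per region.
    """
    floors: Dict[str, float] = {}
    for region, demand in forecast.items():
        # Only a suppressing (negative) contribution is restored; a positive
        # attribution leaves the forecast unchanged.
        suppressed = max(0.0, -proxy_attribution.get(region, 0.0))
        floors[region] = alpha * (demand + suppressed)
    return floors


floors = explanation_adjusted_floors(
    forecast={"urban": 100.0, "rural": 60.0},
    proxy_attribution={"urban": 5.0, "rural": -20.0},
    alpha=0.5,
)
```

Here the rural floor rises from 30 to 40 units because the proxy feature had pulled that region's forecast down by 20 units; the resulting floors could then serve as lower bounds in an allocation model.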

The novel contribution of this research is its methodological integration of XAI and supply chain optimization, which moves beyond fairness analysis to the operational enforcement of equity. In doing so, it contributes both to technical research on AI-enabled logistics and to policy discussions of equitable healthcare delivery.